Scale

Deploy your transformations across the enterprise.

Your AI solution works within a limited scope. Now it needs to be deployed across the whole organization without multiplying costs, without losing performance and without taking two years to do it.

150+ companies supported

5/5 on Google reviews

Problems

The problems you are facing

Your AI solution works within a limited scope, but every attempt to expand it starts from scratch. Costs multiply, data quality varies, and governance cannot keep up.

01

Your pilot works, but it remains confined to a single team.

The model runs in production within a limited scope, and everyone is convinced of its value. But as soon as the conversation turns to deploying it to other entities, subsidiaries or countries, no one knows how to proceed.

02

Each new deployment starts from scratch.

The architecture was not designed for multiple entities. Each extension requires almost as much effort as the initial development. Costs and timelines explode with every iteration.

03

Data quality varies from entity to entity.

The model performs well on the pilot team's data, but data from other entities is structured differently, incomplete, or of uneven quality. Performance degrades at scale.

04

Governance does not keep up with growth.

As the number of users and models grows, compliance, traceability and control become more critical. And no one has put a structure in place for them.

Answer

Our approach

We work both in Consulting and in Structured Projects with a commitment to results.

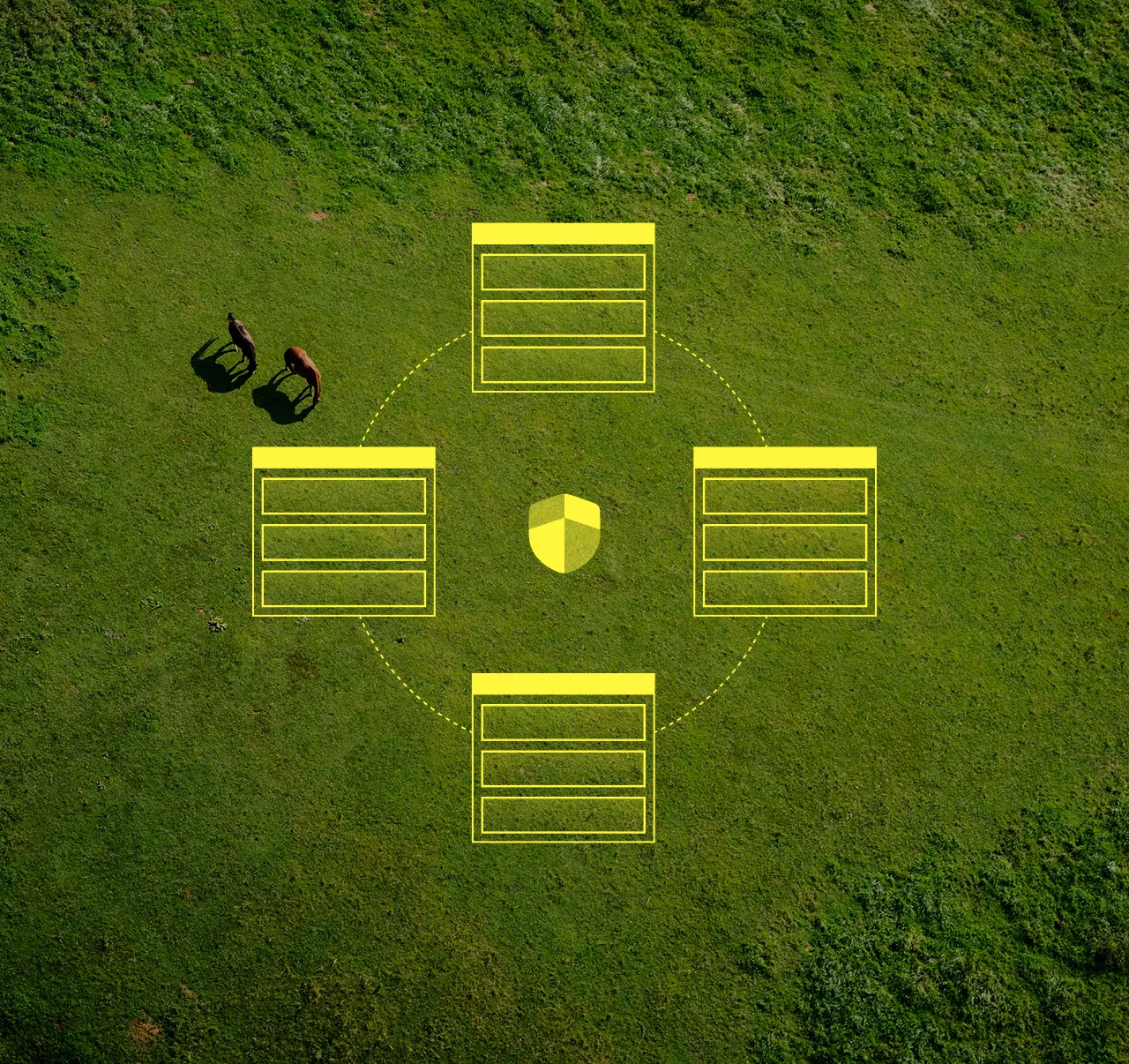

Scalability audit

We assess the existing architecture, data flows, and business processes to identify what will hold up at scale and what needs to be redesigned. No massive deployment on fragile foundations.

Industrialization of infrastructure

We adapt the technical architecture to support scaling: automating pipelines, standardizing models, setting up MLOps and multi-entity deployment environments.

Harmonization of data

We structure data repositories, standardize formats across entities, and implement the quality controls necessary for models to perform consistently everywhere.
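To make the idea of shared quality controls concrete, here is a minimal sketch, not Diametral's actual tooling. It assumes each entity's records arrive as Python dicts and uses hypothetical rule names and fields (`customer_id`, `amount`, `currency`) chosen purely for illustration. The same rules are applied to every entity, which is what lets pass rates be compared from one branch to another.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical shared rule: a readable name plus a predicate each record must satisfy.
@dataclass(frozen=True)
class QualityRule:
    name: str
    check: Callable[[dict], bool]

# Illustrative rules only; real ones would come from the harmonized data repository.
SHARED_RULES = [
    QualityRule("customer_id present", lambda r: bool(r.get("customer_id"))),
    QualityRule("amount is non-negative",
                lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0),
    QualityRule("currency is a 3-letter uppercase code",
                lambda r: isinstance(r.get("currency"), str)
                and len(r["currency"]) == 3 and r["currency"].isupper()),
]

def quality_report(records_by_entity: dict[str, list[dict]],
                   rules: list[QualityRule] = SHARED_RULES) -> dict[str, dict[str, float]]:
    """For each entity, return the share of records passing each shared rule."""
    report = {}
    for entity, records in records_by_entity.items():
        total = len(records)
        report[entity] = {
            # An entity with no records scores 0.0: missing data counts as failing.
            rule.name: (sum(rule.check(r) for r in records) / total if total else 0.0)
            for rule in rules
        }
    return report

def failing_entities(report: dict[str, dict[str, float]],
                     threshold: float = 0.95) -> list[tuple[str, str]]:
    """List (entity, rule) pairs whose pass rate falls below the shared threshold."""
    return [(entity, rule)
            for entity, rates in report.items()
            for rule, rate in rates.items()
            if rate < threshold]
```

For example, `quality_report({"Lyon": [...], "Brussels": [...]})` yields one row of pass rates per branch, and `failing_entities` flags the branches that need remediation before a model is rolled out there. The 0.95 threshold is an arbitrary placeholder.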

Progressive deployment and governance

We deploy entity by entity, with a governance framework that grows with the scope: access rights, traceability of decisions, performance monitoring by model and by entity.

Benefits/Impacts

What you gain

A clear view of your AI use cases, a digital roadmap and processes redesigned to leverage artificial intelligence by design.

01

Marginal deployment costs fall sharply. Thanks to an industrialized architecture, each newly connected entity costs a fraction of the first.

We automate pipelines, standardize models, and set up multi-entity deployment environments. What took months for the pilot takes weeks for each subsequent expansion.

02

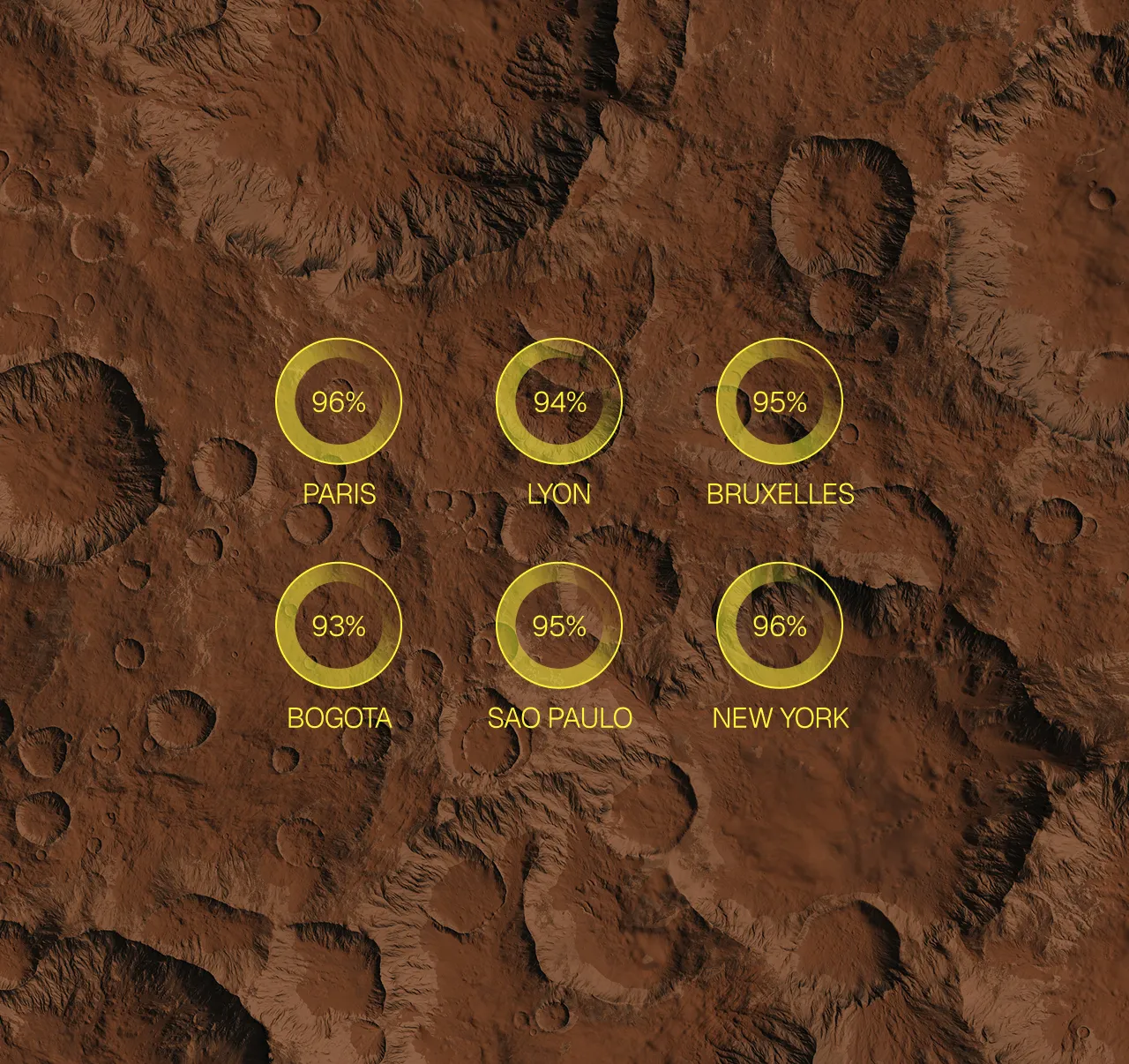

Consistent performance across the entire scope. The models work just as well in the Lyon branch as in the Brussels branch.

We harmonize data repositories, standardize formats across entities, and deploy the necessary quality controls to ensure that each model performs reliably, regardless of the local context.

03

Governance that holds up. Regulatory compliance, traceability and monitoring are integrated from the start, not added afterwards.

As the scope grows, compliance and control issues become more critical. We deploy a governance framework that evolves with the number of users, models, and entities.

04

Autonomy of local teams. Each entity can use AI systems independently, without relying on a central team for each action.

Training for local teams, tailored documentation and designated internal points of contact for each deployment wave. The goal: every entity fully operational without filing support tickets every day.

05

A return on investment that multiplies. The value created by the initial pilot is replicated across the organization, turning a local success into a global competitive advantage.

Scale is the moment when ROI changes dimension. A model that saved €200K within a single scope generates several million once deployed across the group.

Your questions, our answers

All the answers to understand our approach, how we work and what you can expect from our collaboration.

What does scaling AI mean in business?

Scaling AI means extending a solution that works within a pilot scope to every entity, subsidiary or country of a group. Diametral's Scale offering industrializes the infrastructure, harmonizes data quality across entities and establishes scalable governance so that no deployment has to start from scratch.

How do you deploy AI across every country?

Diametral deploys AI at scale in three stages: a scalability audit of the pilot architecture, standardized MLOps industrialization, then a gradual rollout entity by entity under a common governance framework. This approach sharply reduces the deployment cost for each new subsidiary and ensures consistent performance.

Why does scaling increase costs?

Costs explode when the pilot was not designed for scale: each entity redevelops its own connectors, multiplies models and duplicates cloud resources. Diametral addresses this from the Build phase onward by enforcing a shared architecture, a single feature store and standardized CI/CD pipelines.

What is MLOps on a large scale?

Large-scale MLOps refers to the set of practices that make it possible to deploy and monitor dozens or hundreds of AI models in parallel. Diametral equips your teams with a centralized model registry, automated drift monitoring and multi-environment CI/CD to maintain control over a growing fleet.
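Automated drift monitoring can be illustrated with the Population Stability Index (PSI), a common way to compare a model's current score distribution with a reference one. The sketch below is an assumption on our part, not a description of Diametral's stack: it uses fixed bin edges, a hypothetical `churn` model, and the frequently cited 0.2 rule-of-thumb alert threshold. It checks every (model, entity) pair in a fleet and returns the ones that have drifted.

```python
import math
from bisect import bisect_right

def bin_shares(values: list[float], edges: list[float]) -> list[float]:
    """Share of values in each of len(edges) + 1 bins defined by sorted inner edges."""
    if not values:
        raise ValueError("sample must be non-empty")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1  # a value equal to an edge goes to the upper bin
    return [c / len(values) for c in counts]

def psi(reference: list[float], current: list[float],
        edges: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index: sum over bins of (cur - ref) * ln(cur / ref)."""
    total = 0.0
    for r, c in zip(bin_shares(reference, edges), bin_shares(current, edges)):
        r, c = max(r, eps), max(c, eps)  # floor empty bins to avoid log(0)
        total += (c - r) * math.log(c / r)
    return total

def drift_alerts(fleet: dict[tuple[str, str], tuple[list[float], list[float]]],
                 edges: list[float], threshold: float = 0.2) -> list[tuple[str, str]]:
    """fleet maps (model, entity) -> (reference scores, current scores); returns drifted pairs."""
    return sorted(key for key, (ref, cur) in fleet.items()
                  if psi(ref, cur, edges) > threshold)

# Hypothetical usage: same model, two branches, one with shifted scores.
edges = [0.25, 0.5, 0.75]
reference = [0.1, 0.3, 0.6, 0.9]
shifted = [0.8, 0.85, 0.9, 0.95]
fleet = {("churn", "Lyon"): (reference, reference),
         ("churn", "Brussels"): (reference, shifted)}
print(drift_alerts(fleet, edges))
```

Because the same check runs for every model and every entity, adding a new subsidiary only adds entries to `fleet` rather than requiring a new monitoring setup.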

How to guarantee data governance during scaling?

Data governance in the Scale phase rests on three pillars: a single quality framework applied to every entity, a shared data catalog and a consistent access policy across countries. Diametral rolls out this framework gradually to avoid operational disruptions and ensure AI Act and GDPR compliance at group level.

Contact

Ready to go from pilot to corporate standard?

Describe your current context. A Diametral expert will work with you to identify the shortest path to deployment at scale.